I Let an AI Audit My Home Assistant Setup — Here's What It Found

906 entities, 122 unavailable, and a Frigate upgrade I'd been putting off for months. I gave Claude Code a single open-ended prompt and let it loose on my Home Assistant config.

I have two Home Assistant installs. My main home — the one I've been tinkering with longest — runs on a 2016 Mac Mini that is starting to show its age, hosting a config that has grown into something genuinely unwieldy. My second property has a newer, smaller install. Still complex enough to be interesting, but young enough that I haven't had years to accumulate chaos.

It's still managed to accumulate some chaos.

906 entities across 36 domains, 43 automations, 32 HACS components, and enough Lovelace cards to wallpaper a small room. And because I don't touch it as regularly as my main home, the organic growth problem is worse: I'd stopped seeing the mess entirely. You know roughly what's working. You have a vague awareness of the things that aren't. But a proper audit? That's always tomorrow's job.

Then I started using Claude Code.

I wasn't quite brave enough to run this experiment on the main house first. The second property felt like a safer place to start, with less to lose if something went sideways.

It didn't go sideways. But I'm still building up to the main house.

What is Claude Code?

Claude Code is Anthropic's AI coding assistant that runs in your terminal. Unlike a chat interface, it can actually read files, understand a codebase as a whole, and make changes — not just suggest them. I came across it via Dan Malone's site, where he's been writing about practical AI tooling in a way that actually makes sense. I'd been using it for software projects, and it occurred to me: my Home Assistant config is just a collection of YAML files. Why not point it at those?

Setting It Up

The first thing I tried was the official Home Assistant MCP server. It works, but the functionality is quite constrained — it exposes a limited surface area of your HA instance and isn't really designed for deep config analysis.

What worked much better was ha-mcp — a community MCP server you can find on GitHub. Setup is straightforward: give it your HA URL and a long-lived access token (generated in your HA profile settings), and Claude Code can connect directly to your instance. From there, the audit got started quickly.
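The snippet below is not ha-mcp's code. It is a minimal, standard-library Python sketch of what a long-lived access token does in general: it goes in an `Authorization: Bearer` header on requests to Home Assistant's REST API. The URL and token are placeholders, and `build_request` and `check_api` are hypothetical helper names.

```python
import json
import urllib.request

# Placeholder values: substitute your own instance URL and the
# long-lived access token created on your HA profile page.
HA_URL = "http://homeassistant.local:8123"
TOKEN = "YOUR_LONG_LIVED_ACCESS_TOKEN"


def build_request(base_url: str, token: str, path: str) -> urllib.request.Request:
    """Build an authenticated GET request for Home Assistant's REST API."""
    url = base_url.rstrip("/") + path
    return urllib.request.Request(
        url,
        headers={
            # Home Assistant expects the token as a Bearer credential.
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
    )


def check_api(base_url: str, token: str) -> dict:
    """Call GET /api/ and return the decoded JSON body."""
    req = build_request(base_url, token, "/api/")
    with urllib.request.urlopen(req, timeout=10) as resp:
        return json.load(resp)
```

Against a running instance, `check_api(HA_URL, TOKEN)` should return `{"message": "API running."}` when the token is valid; an invalid token gets an HTTP 401, which `urlopen` raises as an `HTTPError`.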

The prompt I used to kick things off was deliberately open-ended:

"Carry out a full audit of my Home Assistant using ha-mcp. Tell me what issues, risks, opportunities and failures you can find, and highlight them for me."

That's it. No hand-holding, no specific areas to look at. What came back surprised me.

The Numbers

Claude Code worked through my config and produced a structured audit report. The headline figures:

- 906 entities across 36 domains

- 9 areas (across 3 floors)

- 43 automations, 2 scripts

- 6 add-ons, 32 HACS components (all up to date)

- 122 unavailable entities — 13.5% of my setup

That last number stung a bit. More than one in eight entities just... not working. Some I knew about. Most I'd quietly stopped thinking about.
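Headline numbers like these are easy to reproduce yourself. As a minimal sketch (not what Claude Code actually ran), the function below takes the JSON list returned by `GET /api/states`, where each item carries an `entity_id` and a `state`, and counts how many report `unavailable`, overall and per domain. The function name is hypothetical.

```python
from collections import Counter


def unavailable_summary(states: list[dict]) -> tuple[int, int, float, Counter]:
    """Return (total, unavailable, percent, per-domain counts) for a /api/states list."""
    unavailable = [s["entity_id"] for s in states if s.get("state") == "unavailable"]
    # The domain is the part of the entity_id before the first dot, e.g. "sensor".
    by_domain = Counter(eid.split(".", 1)[0] for eid in unavailable)
    total = len(states)
    # Guard against an empty list to avoid dividing by zero.
    pct = round(100 * len(unavailable) / total, 1) if total else 0.0
    return total, len(unavailable), pct, by_domain
```

With 906 entities and 122 of them unavailable, the percentage comes out at 13.5, matching the report.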

What It Found

The BMW Problem

The biggest offender was my BMW iX M60 integration. I use BimmerData Streamline via HACS, and it had been stuck in a setup_retry state for a while. I knew it wasn't working. I didn't know why.

Claude Code dug into the integration logs and pinpointed the cause: BMW had updated their API, and the BimmerData Streamline integration simply hadn't kept up. Around 100 entities — charging state, battery data, range, tyre pressures, GPS location, door states — were all dark as a result.
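The useful step here was tying roughly 100 dead entities back to one integration. Here is a sketch of that idea under stated assumptions. It takes the same `/api/states` list plus a mapping from `entity_id` to integration, and counts unavailable entities per integration. The entity registry records the integration as each entry's `platform`, but how you export that mapping is left open, so treat the `registry` input as an assumption. One broken integration tends to dominate the top of the list.

```python
from collections import Counter


def unavailable_by_integration(states: list[dict], registry: dict[str, str]) -> list[tuple[str, int]]:
    """Count unavailable entities per integration, most affected first.

    `registry` maps entity_id -> integration domain (assumed input, e.g.
    built from the entity registry's "platform" field).
    """
    counts = Counter(
        # Entities missing from the mapping are grouped under "unknown".
        registry.get(s["entity_id"], "unknown")
        for s in states
        if s.get("state") == "unavailable"
    )
    return counts.most_common()
```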

The audit gave me enough to go on. I dug around, found a newer integration that supports BMW's current API, and migrated to it. All those entities came back. The audit is what pointed me in the right direction: without it, I'd probably still be assuming it was a credentials problem and ignoring it.

The Ghost in the Machine

Among the other unavailable items, Claude Code flagged something I'd completely forgotten about: script.script. An unnamed, empty script, sitting there doing nothing. The kind of thing you create by accident, forget to delete, and then trip over every time you try to do something with scripts. Gone.

Fiona's iPhone

Nine sensors associated with my wife Fiona's iPhone were unavailable. The audit flagged them clearly, and digging in revealed the actual cause: HA still had a record of her old phone. She'd upgraded, and the companion app was running on the new device, but the old device entry was still sitting there confusing things. A quick tidy-up under Devices & services in the settings resolved it.

Simple when you know what to look for. Easy to miss when you're not actively looking — and this one had apparently been wrong for a while.

The Stale Add-On

"Get HACS" — the bootstrap add-on you install to get HACS itself — was still installed and stopped. Once HACS is running, you don't need it anymore. It had just been sitting there. Removed.

What Was Actually in Good Shape

It wasn't all bad news. Claude Code noted what was working well, which was worth hearing:

- HA Core was current (2026.2.3)

- All 32 HACS components were up to date

- Integration coverage was solid: Octopus Energy, myenergi Zappi, Drayton Wiser, Frigate NVR, Proxmox VE, LLM Vision

- Matter Server running (good for future-proofing)

- Presence detection working for all four family members

It's reassuring to have the things that matter confirmed as healthy, rather than just assuming.

What Got Fixed — Including One That Scared Me

The audit flagged a Frigate update: I was on 0.14.9, and 0.16.4 was available (0.17.0 was actually out too, but I'm not going there yet). On paper, straightforward. In practice, not quite.

Frigate runs in an LXC container on Proxmox, and the version jump was significant enough that the container itself needed to be rebuilt. The process: back up the existing config, tear down the container, create a new one, restore the config, and debug whatever didn't come back cleanly. I know my way around Proxmox reasonably well, but "reasonably well" and "won't make a mistake under pressure" are different things.
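The first step, backing up the existing config, is the one worth scripting before anything gets torn down. Here is a minimal standard-library sketch that archives a config directory into a timestamped `.tar.gz`. Both paths are placeholders, because where Frigate's config lives inside your container depends on how it was installed. Proxmox's own backup tool (`vzdump`) covers the container as a whole. This sketch only captures the config files you'd need to restore into a fresh one.

```python
import shutil
import time
from pathlib import Path


def backup_config(config_dir: str, dest_dir: str) -> Path:
    """Archive config_dir into a timestamped .tar.gz inside dest_dir."""
    src = Path(config_dir)
    if not src.is_dir():
        raise FileNotFoundError(f"config directory not found: {src}")
    dest = Path(dest_dir)
    dest.mkdir(parents=True, exist_ok=True)
    stamp = time.strftime("%Y%m%d-%H%M%S")
    # make_archive appends ".tar.gz" to base_name and returns the archive path.
    archive = shutil.make_archive(
        str(dest / f"frigate-config-{stamp}"), "gztar", root_dir=src
    )
    return Path(archive)
```

For example, `backup_config("/path/to/frigate/config", "/root/frigate-backups")` with your own paths. Copy the archive off the container before destroying it.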

This is where handing things to Claude Code required a genuine leap of faith. It wasn't just reading files anymore — it was making changes at the host level. I took a breath, watched what it was doing, and went with it.

It worked. More than that, issues came up during the process that I wouldn't have resolved on my own: config nuances, permission errors, the kind of thing that would have had me trawling forums for an hour. Claude Code walked me through each one as it came up. The whole thing was nerve-wracking in the moment and genuinely impressive in retrospect.

The BMW and Fiona situations I could probably have sorted on my own given enough time. The Frigate upgrade I'm not sure I'd have tackled solo, or at least not without putting it off for another few months.

Building a Dashboard

The part that impressed me most was what happened next. Rather than ask for something specific, I gave Claude Code a deliberately open brief: look at all the sensors and data I have, and suggest a dashboard layout that you think would be useful. It also had access to all my existing dashboard YAML, so it could see the card libraries I was using, my naming conventions and the popup style I'd established elsewhere. What it built felt consistent with what I had, while being entirely new.

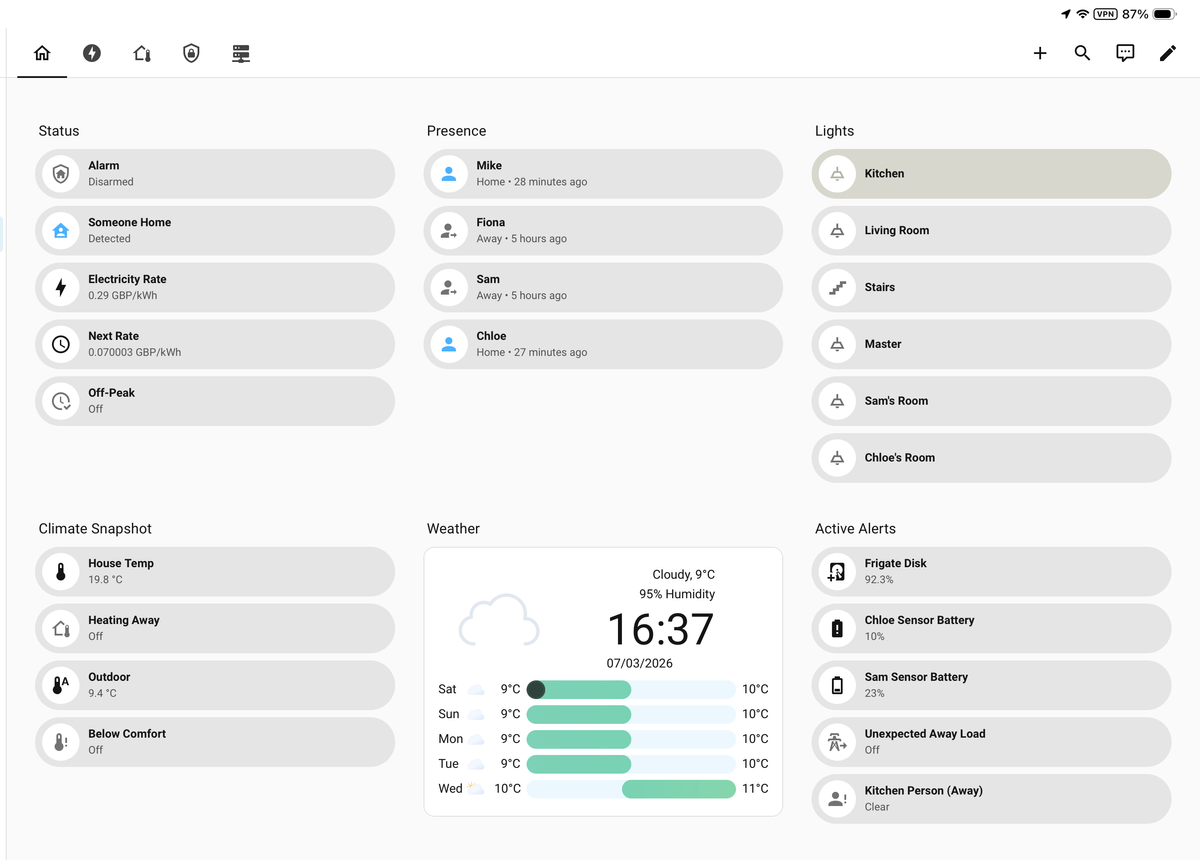

What struck me was how sensible the information architecture is:

- Status — alarm state, occupancy, live electricity rate, next tariff rate, off-peak status. The Octopus Energy data presented exactly where you'd want it.

- Presence — all four family members, when they were last seen, home or away at a glance.

- Lights — room-by-room, clean and tappable.

- Climate Snapshot — house temperature, heating mode, outdoor temp, comfort status.

- Weather — current conditions plus a 5-day forecast with Wiser-style bar chart visualisation.

- Active Alerts — Frigate disk usage, low battery warnings on companion app sensors, unexpected load states. Things you actually need to know about.

None of that layout was directed by me. Claude Code looked at what data was available and made editorial decisions about what belonged together and how to organise it. The tab structure across the top (there are multiple views beyond this home screen) followed the same logic.
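To make the room-by-room idea concrete, here's a Python sketch of one small piece of it. It groups light entities by area and emits a Lovelace config with one built-in `entities` card per room inside a `vertical-stack`. It uses only core card types, not the custom card libraries the real dashboard relies on. The `entity_areas` input (entity ID to area name) is an assumption; in Home Assistant that mapping lives in the entity and device registries.

```python
from collections import defaultdict


def lights_by_area(entity_areas: dict[str, str]) -> dict:
    """Build a Lovelace vertical-stack with one entities card per area.

    `entity_areas` maps entity_id -> area name (assumed input).
    """
    rooms: dict[str, list[str]] = defaultdict(list)
    for entity_id, area in entity_areas.items():
        # Only lights go on these cards; other domains are skipped.
        if entity_id.startswith("light."):
            rooms[area].append(entity_id)
    cards = [
        {"type": "entities", "title": area, "entities": sorted(ids)}
        for area, ids in sorted(rooms.items())
    ]
    return {"type": "vertical-stack", "cards": cards}
```

Dumping the result with PyYAML's `yaml.safe_dump(config, sort_keys=False)` gives YAML you could paste into a dashboard's raw configuration editor. Sorting areas and entities keeps the output stable between runs, which makes diffs easier to review.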

It's not perfect — there were a few tweaks needed after the fact. But as a starting point generated from a single open-ended request, it's genuinely better than dashboards I've built myself after hours of iteration.

The other thing worth noting: it wrote working YAML directly, accounting for the specific cards I had installed. Not snippets to copy and paste out of a chat window. Actual files.

Is This Actually Useful?

Honestly: yes, more than I expected.

The audit itself took Claude Code a matter of minutes. Doing the same thing manually — cross-referencing logs, tracing unavailable entities back to their source integrations, checking HACS versions — would have taken me an evening, and I'd have missed things. I'd known the BMW integration wasn't working for weeks but had no idea it was an API change issue.

The dashboard work is where it gets genuinely interesting. YAML is not difficult, but writing Lovelace dashboards is tedious. Having an assistant that can take a plain English brief and produce working card configuration — accounting for the specific cards you have installed, your entity naming, your areas — removes a lot of friction.

There are limits. Claude Code can read your config and reason about it, but it can't see your actual UI, doesn't know what your home looks like, and occasionally produces something that needs tweaking. It's a tool, not a replacement for knowing your own system.

But as a way to audit, fix, and improve a Home Assistant setup that's grown too large to hold in your head? It's become part of how I work with HA now.

If you've used Claude Code or any other AI tooling with Home Assistant, I'd be curious what you found. Drop a comment below.